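The following code fetches the contents of the 5 Wikipedia articles:

    import wikipedia

    articles = []  # fill in the 5 Wikipedia article titles here
    wiki_lst = []
    for article in articles:
        print(article)
        # download the page and keep only its plain-text content
        wiki_lst.append(wikipedia.page(article).content)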

#SIMILARITY MEASURE CODE#

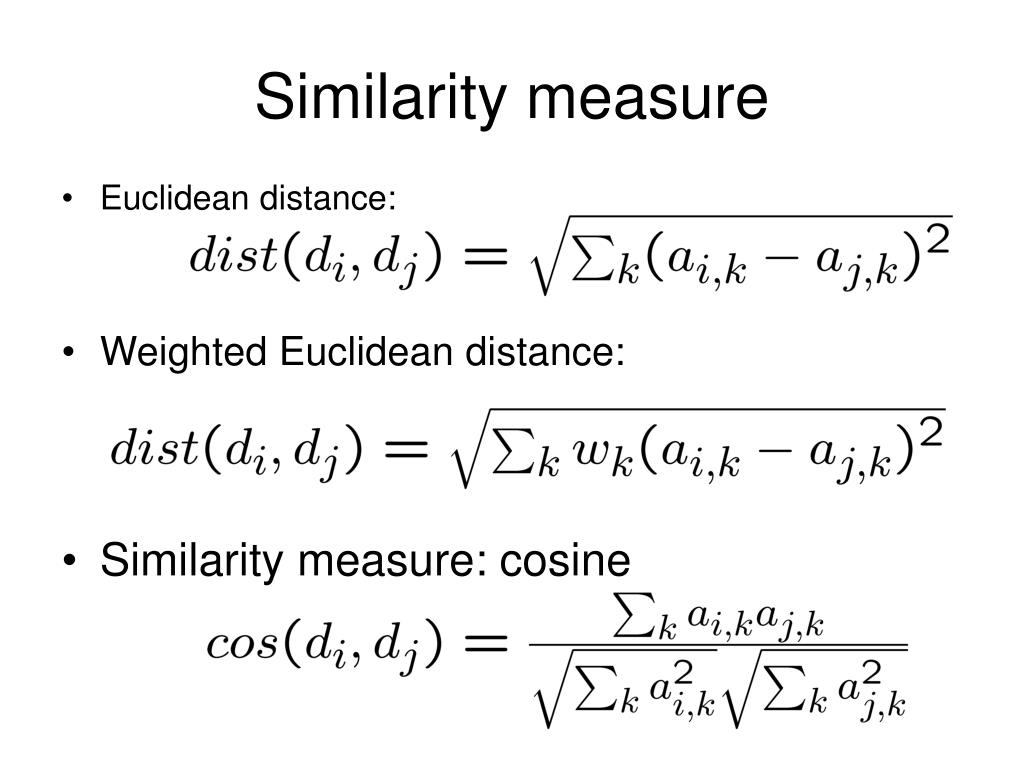

Let's take the problem of matching similar documents. We first create the document-term matrix, which contains the frequency of each term across a collection of documents. Documents usually have uneven lengths, as is the case with Wikipedia articles. Say the word "math" appears more often in Document 1 than in Document 2: cosine similarity is a good choice here, because we are concerned with the content of a document rather than its length. If length did matter, Euclidean distance might be the better option. Now we will write a short piece of Python code to map similar Wikipedia articles.
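To make the document-term matrix idea concrete, here is a minimal, dependency-free sketch. It is not the article's original code: the helper names (`tokenize`, `document_term_matrix`, `cosine_similarity`, `euclidean`) and the three toy documents are assumptions made for illustration. In practice, scikit-learn's `CountVectorizer` and `cosine_similarity` are commonly used for the same job.

```python
import math
import re
from collections import Counter

def tokenize(text):
    # Lowercase the text and keep alphabetic words only (a deliberately crude tokenizer).
    return re.findall(r"[a-z]+", text.lower())

def document_term_matrix(docs):
    # One Counter of term frequencies per document.
    counts = [Counter(tokenize(doc)) for doc in docs]
    # Shared, sorted vocabulary across the whole collection.
    vocab = sorted(set().union(*counts))
    # Row i holds the frequency of every vocabulary term in document i.
    matrix = [[count[term] for term in vocab] for count in counts]
    return vocab, matrix

def cosine_similarity(x, y):
    dot = sum(a * b for a, b in zip(x, y))
    norm_x = math.sqrt(sum(a * a for a in x))
    norm_y = math.sqrt(sum(b * b for b in y))
    if norm_x == 0 or norm_y == 0:
        raise ValueError("cosine similarity is undefined for a zero vector")
    return dot / (norm_x * norm_y)

def euclidean(x, y):
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(x, y)))

# Toy documents (assumed): the second is the first repeated three times,
# so it is longer but has exactly the same content.
docs = [
    "math is fun and math is useful",
    "math is fun and math is useful " * 3,
    "cooking pasta is fun",
]

vocab, dtm = document_term_matrix(docs)
sim_short_long = cosine_similarity(dtm[0], dtm[1])    # same content, different length
sim_math_cooking = cosine_similarity(dtm[0], dtm[2])  # different content
dist_short_long = euclidean(dtm[0], dtm[1])           # penalizes the length difference

print(vocab)
print(round(sim_short_long, 3), round(sim_math_cooking, 3), round(dist_short_long, 3))
```

The short and long "math" documents point in the same direction, so their cosine similarity is essentially 1 even though their Euclidean distance is well above zero. This is the length-independence the paragraph above describes.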

The sklearn function for Euclidean distance is computationally more efficient than a hand-written one. The greater the distance, the lower the similarity between the two objects; the lower the distance, the higher the similarity. To convert this distance metric into a similarity metric, we can divide each distance by the maximum distance and subtract the result from 1, which gives a similarity score between 0 and 1. We will look at the example after discussing the cosine metric.

Cosine similarity is another metric, used specifically to find the similarity between documents. It measures the angle between x and y, as indicated in the diagram, and is used when the magnitude of the vectors does not matter. The diagram summarizes both the Euclidean and cosine metrics. Cosine looks at the angle between the two vectors and ignores magnitude, while Euclidean focuses on the straight-line distance and takes the magnitude of the vectors into account.

Text mining is one of the areas where we can use cosine similarity to map similar documents. We can also use it to rank documents against a given vector of words; CV shortlisting is one such use case.
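The two ideas above, turning distances into similarity scores and comparing angle against straight-line distance, can be sketched in a few lines of plain Python. The function names and the sample vectors are illustrative assumptions, and the conversion follows the `1 - d / max(d)` rule described in the text.

```python
import math

def euclidean(x, y):
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(x, y)))

def cosine_similarity(x, y):
    # Cosine of the angle between x and y; vector magnitude cancels out.
    dot = sum(a * b for a, b in zip(x, y))
    norm_x = math.sqrt(sum(a * a for a in x))
    norm_y = math.sqrt(sum(b * b for b in y))
    if norm_x == 0 or norm_y == 0:
        raise ValueError("cosine similarity is undefined for a zero vector")
    return dot / (norm_x * norm_y)

def distances_to_similarities(distances):
    # Scale by the largest distance, then flip: 0 distance -> 1, max distance -> 0.
    d_max = max(distances)
    if d_max == 0:
        return [1.0 for _ in distances]  # all objects are identical
    return [1.0 - d / d_max for d in distances]

# Hypothetical word-count vectors (assumed for this example).
query = [1, 2, 0]
same_direction = [2, 4, 0]   # same proportions, twice the magnitude
different = [0, 1, 3]

cos_same = cosine_similarity(query, same_direction)
cos_diff = cosine_similarity(query, different)

dists = [euclidean(query, v) for v in (query, same_direction, different)]
sims = distances_to_similarities(dists)

print(round(cos_same, 3), round(cos_diff, 3))
print([round(s, 3) for s in sims])
```

Cosine treats `same_direction` as a perfect match for `query`, while the Euclidean-based score marks it down because its magnitude differs.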

Similarity Measures - Scoring Textual Articles

Many real-world applications use similarity measures to see how two objects are related. We can use these measures in applications involving computer vision and natural language processing, for example, to find and map similar documents. One important business use case is matching resumes with a job description, which saves the recruiter a considerable amount of time. Another is segmenting customers for marketing campaigns with the K-Means clustering algorithm, which also uses similarity measures. Similarities are usually non-negative, ranging between 0 (no similarity) and 1 (complete similarity). We will specifically discuss two important similarity metrics, Euclidean and cosine, along with a coding example that deals with Wikipedia articles.

Euclidean Metric

Do you remember the Pythagorean theorem? It is used to calculate the distance between two points, as indicated in the figure below.

$$d(x, y) = \sqrt{\sum_{k=1}^{n} (x_k - y_k)^2}$$

This defines the Euclidean distance between two points in one-, two-, three- or higher-dimensional space, where $n$ is the number of dimensions and $x_k$ and $y_k$ are the components of $x$ and $y$ respectively.

Python function to define the Euclidean distance:

    import numpy as np

    def euclidean_distance(x, y):
        # x and y are NumPy arrays of the same shape
        return np.sqrt(np.sum((x - y) ** 2))

You can also use the sklearn library to calculate the Euclidean distance.
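As a quick worked check of the Euclidean formula, here is a minimal sketch that uses only Python's standard library. The function name `euclidean` and the sample points are assumptions made for illustration, and `math.dist` (available from Python 3.8) serves as a cross-check.

```python
import math

def euclidean(x, y):
    """Straight-line distance between two equal-length sequences of numbers."""
    if len(x) != len(y):
        raise ValueError("x and y must have the same number of dimensions")
    return math.sqrt(sum((xk - yk) ** 2 for xk, yk in zip(x, y)))

# Two dimensions: a right triangle with legs 3 and 4 has hypotenuse 5.
p, q = (0.0, 0.0), (3.0, 4.0)
d_2d = euclidean(p, q)

# The same formula works unchanged in four dimensions:
# squared differences are 1 + 4 + 4 + 16 = 25.
a, b = (1.0, 2.0, 3.0, 4.0), (2.0, 4.0, 5.0, 8.0)
d_4d = euclidean(a, b)

print(d_2d, d_4d)
print(math.isclose(d_4d, math.dist(a, b)))
```

The two-dimensional case is the Pythagorean theorem itself; the four-dimensional case shows that adding dimensions only adds terms under the square root.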